Java Virtual Threads Made Easy

Ditching heavy OS threads: How Java 21 lets you cheat the system with millions of lightweight virtual threads.

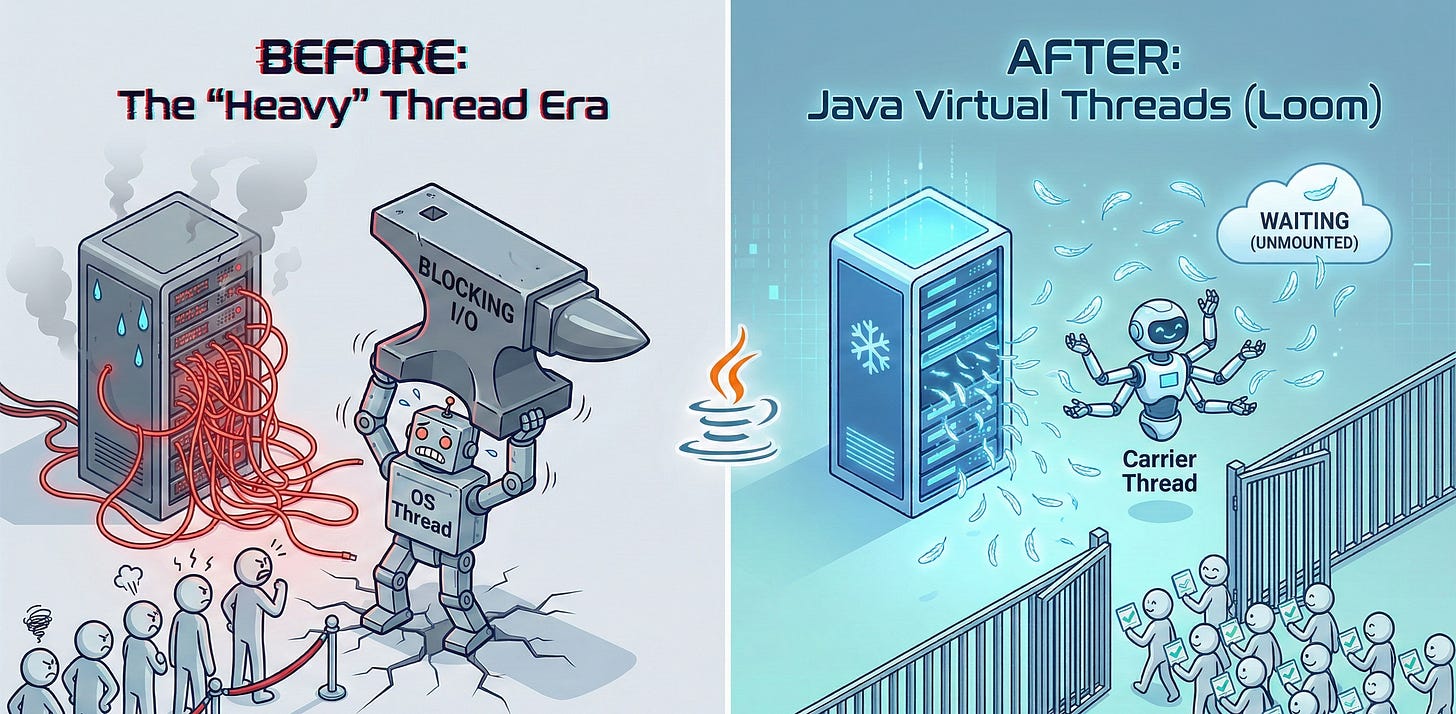

Remember the “bad old days” of Java concurrency?

Picture this: It’s 2015. You need your backend service to handle 50,000 concurrent WebSocket connections. You naively try to spin up a new Thread() for each one.

Your server immediately catches fire, melts through the rack, and sends you a Slack message saying: OutOfMemoryError: unable to create new native thread.

We’ve all been there.

For years, Java developers have been stuck between a rock and a hard place when it comes to high scalability.

The Rock (The “Thread-per-Request” model): It’s easy to understand. Code executes top-to-bottom. If you need to call a database, you block and wait. But OS threads are heavy. They are expensive to create and eat up megabytes of RAM just sitting there doing nothing. You hit a ceiling fast.

The Hard Place (Reactive Programming: RxJava, WebFlux, Vert.x): To bypass the thread limit, we started writing asynchronous, non-blocking code. It scaled beautifully. It also made our codebases look like a plate of spaghetti that had been dropped on the floor. Debugging a reactive stack trace is a form of cruel and unusual punishment.

Enter Java 21 and Project Loom. Enter Virtual Threads.

They promise the holy grail: The simplicity of the blocking “thread-per-request” style, but with the scalability of reactive programming.

But what are they, really? Let’s break it down without needing a PhD in JVM internals.

The “Diet Coke” of Threads

To understand virtual threads, I found it helpful to listen in on a conversation between Sarah (a grizzled veteran backend lead) and Mike (an enthusiastic mid-level dev) at the office coffee machine.

Mike: “I keep hearing about Project Loom and these virtual threads in Java 21. Are they, like, fake threads? Do they run in the metaverse?”

Sarah: (Laughs) “No, Mike. They are very real. Think of them as ‘Diet Threads’. All the concurrency flavor of a regular thread, with none of the operating system calories.”

Mike: “Calories?”

Sarah: “Resource heaviness. A traditional Java thread, what we now call a ‘Platform Thread’, is just a thin wrapper around an actual operating system thread. The OS manages it. They take time to boot up, and they reserve a big chunk of memory for their stack, usually around 1MB by default, whether they use it or not. If you want a million of them, that is roughly a terabyte reserved just for stacks.”

Mike: “Okay, so how are virtual threads different?”

Sarah: “A virtual thread is managed entirely by the Java Virtual Machine (JVM), not the OS. They are incredibly lightweight object structures in the heap. Their stacks grow and shrink dynamically. You can create millions of them on a standard laptop, and the JVM barely blinks.”

“Think of platform threads as expensive corporate apartments you have to lease for a year. Think of virtual threads as cheap Airbnb rooms you rent by the hour. You can afford way more Airbnbs.”
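
To make Sarah's distinction concrete, here is a minimal sketch that starts one thread of each kind with the Java 21 `Thread.ofPlatform()` and `Thread.ofVirtual()` builders. The class and method names (`ThreadKinds`, `startBoth`) are made up for illustration.

```java
class ThreadKinds {
    // Starts one platform thread and one virtual thread; each records whether
    // the JVM considers it virtual. Index 0 = platform, index 1 = virtual.
    static boolean[] startBoth() {
        boolean[] isVirtual = new boolean[2];
        Thread platform = Thread.ofPlatform().start(
                () -> isVirtual[0] = Thread.currentThread().isVirtual());
        Thread virtual = Thread.ofVirtual().start(
                () -> isVirtual[1] = Thread.currentThread().isVirtual());
        try {
            // join() guarantees the writes above are visible to this thread
            platform.join();
            virtual.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return isVirtual;
    }

    public static void main(String[] args) {
        boolean[] result = startBoth();
        System.out.println("platform thread is virtual? " + result[0]);
        System.out.println("virtual thread is virtual?  " + result[1]);
    }
}
```

Both are ordinary `java.lang.Thread` objects, which is why existing blocking code can usually run on either kind unchanged.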

The Magic Trick: How They Work

The secret sauce of virtual threads isn’t actually magic; it’s a clever bit of scheduling reorganization.

In the old world, if you had 8 CPU cores, you probably had a thread pool with maybe 16 or 32 platform threads actively running. If one of those threads made a Database call, it stopped. It blocked. The OS thread just sat there, filing its nails, waiting for the DB to respond. A total waste of an expensive resource.

Virtual threads change this mapping.

We still have platform threads (now called Carrier Threads in this context), but only a few of them—usually equal to the number of CPU cores available.

The JVM then schedules massive amounts of virtual threads onto these few carrier threads.
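
You can observe this mapping yourself. The sketch below runs many short blocking tasks on virtual threads and collects the distinct carriers they were mounted on. It assumes the carrier name is whatever follows `@` in `Thread.toString()`, which is a JDK implementation detail rather than a documented format, so treat it as a diagnostic toy. `CarrierCount` and `carriersUsed` are hypothetical names.

```java
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.Executors;

class CarrierCount {
    // Runs `tasks` short blocking tasks on virtual threads and returns the
    // distinct carrier descriptions seen while the tasks were mounted.
    static Set<String> carriersUsed(int tasks) {
        Set<String> carriers = ConcurrentHashMap.newKeySet();
        try (var executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < tasks; i++) {
                executor.submit(() -> {
                    // Assumption: the text after '@' identifies the carrier thread
                    String description = Thread.currentThread().toString();
                    carriers.add(description.substring(description.indexOf('@') + 1));
                    try {
                        Thread.sleep(10); // stand-in for blocking I/O
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                    }
                });
            }
        } // close() waits for every submitted task
        return carriers;
    }

    public static void main(String[] args) {
        int tasks = 10_000;
        Set<String> carriers = carriersUsed(tasks);
        System.out.println(tasks + " virtual threads shared "
                + carriers.size() + " carrier thread(s)");
        System.out.println("CPU cores reported: "
                + Runtime.getRuntime().availableProcessors());
    }
}
```

On a typical machine the carrier count should land near the core count, far below the number of virtual threads.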

The “Waitstaff” Analogy

Imagine a busy restaurant kitchen.

The Chefs are your CPU cores.

The Waiters are the Platform (OS) Threads.

The Customers are the tasks you want to run (Virtual Threads).

In the old model, a waiter takes an order to a table, and stands there waiting while the customer slowly decides what they want, then waits while they eat, just in case they need ketchup. That waiter can serve only one table at a time.

In the Virtual Thread model, the waiter (Carrier Thread) takes the customer’s order. The moment the customer says, “Hmm, let me think about the wine...” (a blocking I/O operation), the waiter immediately leaves that table and goes to serve another customer.

When the first customer is finally ready to order, they raise their hand. The next available waiter swoops in and picks up right where they left off.

When a virtual thread blocks (e.g., waits for an HTTP response), the JVM performs a magic trick: it “unmounts” the virtual thread from the carrier thread, parks it in heap memory, and lets the expensive carrier thread go do something else.

When the data arrives, the JVM revives the virtual thread and mounts it back onto a carrier thread (not necessarily the same one) to finish its job.

Show Me The Code

The best part about Project Loom is how boring the code looks. You don’t need Mono<T>, Flux<T>, or complex .flatMap() chains.

Here is the “old school” way that melts servers:

    // The old way: Heavy Platform Threads
    for (int i = 0; i < 100_000; i++) {
        new Thread(() -> {
            try {
                // Simulate a blocking operation (like a DB call)
                Thread.sleep(1000);
            } catch (InterruptedException e) {
                throw new RuntimeException(e);
            }
        }).start();
    }
    // Result on most machines: OutOfMemoryError or system crash.

Here is the Java 21 Virtual Thread way:

    import java.util.concurrent.Executors;

    // The new way: Lightweight Virtual Threads
    // This executor starts one new virtual thread per submitted task (no pooling)
    try (var executor = Executors.newVirtualThreadPerTaskExecutor()) {
        for (int i = 0; i < 100_000; i++) {
            executor.submit(() -> {
                try {
                    // Simulate blocking. The JVM handles this efficiently now!
                    Thread.sleep(1000);
                    System.out.println("Task finished on: " + Thread.currentThread());
                } catch (InterruptedException e) {
                    throw new RuntimeException(e);
                }
            });
        }
    } // The try-with-resources waits for all tasks to finish here.
    // Result: It just works. In about 1 second.

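If you run that second block of code, you’ll notice the printed thread descriptions look something like this (virtual threads have no name by default, so this is the output of Thread.toString()):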

VirtualThread[#21027]/runnable@ForkJoinPool-1-worker-3

It tells you it’s a virtual thread, but it’s currently hitching a ride on a carrier platform thread inside the ForkJoinPool.
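
Since virtual threads are unnamed by default, logs and thread dumps get easier to read if you name them. One way to do that, sketched here with a made-up prefix and hypothetical class name, is a `ThreadFactory` built from `Thread.ofVirtual().name(prefix, start)` and passed to `Executors.newThreadPerTaskExecutor`.

```java
import java.util.concurrent.ExecutionException;
import java.util.concurrent.Executors;
import java.util.concurrent.ThreadFactory;

class NamedVirtualThreads {
    // Runs one task on a named virtual thread and returns the name it saw.
    static String runAndGetName(String prefix) {
        // Threads from this factory are named prefix + 0, prefix + 1, ...
        ThreadFactory factory = Thread.ofVirtual().name(prefix, 0).factory();
        try (var executor = Executors.newThreadPerTaskExecutor(factory)) {
            return executor.submit(() -> Thread.currentThread().getName()).get();
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        // "order-worker-" is an illustrative prefix, not a convention
        System.out.println(runAndGetName("order-worker-"));
    }
}
```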

The Warning Labels (Gotchas)

Before you go refactoring your entire codebase, Sarah the Senior Dev has a few warnings. Virtual threads are amazing, but they are not a drop-in replacement for everything.

1. Stop Pooling!

We are conditioned like Pavlov’s dogs to pool threads because they are expensive.

Sarah: “Mike, if I catch you creating a VirtualThread pool, you’re fired. Pooling virtual threads is like buying 10,000 plastic forks for a picnic and then insisting on washing them all afterward. They are disposable. Use them once and throw them away. Just use Executors.newVirtualThreadPerTaskExecutor().”
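
A common reason people reach for a pool is to cap how many tasks hit a scarce resource at once. With virtual threads, a plain `java.util.concurrent.Semaphore` can do that job instead. Here is a rough sketch under that assumption; the class name, the permit count, and the sleeping "service call" are all placeholders.

```java
import java.util.concurrent.Executors;
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicInteger;

class LimitedConcurrency {
    // Starts `tasks` virtual threads but lets at most `permits` of them into
    // the guarded section at once. Returns the highest concurrency observed.
    static int maxObservedConcurrency(int tasks, int permits) {
        Semaphore limiter = new Semaphore(permits);
        AtomicInteger inFlight = new AtomicInteger();
        AtomicInteger maxSeen = new AtomicInteger();
        try (var executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < tasks; i++) {
                executor.submit(() -> {
                    try {
                        limiter.acquire(); // blocks only this virtual thread
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                        return;
                    }
                    try {
                        int now = inFlight.incrementAndGet();
                        maxSeen.accumulateAndGet(now, Math::max);
                        Thread.sleep(5); // placeholder for a call to a limited service
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                    } finally {
                        inFlight.decrementAndGet();
                        limiter.release();
                    }
                });
            }
        }
        return maxSeen.get();
    }

    public static void main(String[] args) {
        System.out.println("max concurrent calls: " + maxObservedConcurrency(1_000, 10));
    }
}
```

The threads stay cheap and disposable; only the guarded section is rationed.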

2. The “Pinning” Problem

Remember the magic trick where the JVM unmounts a blocked virtual thread? There is kryptonite that stops this from working: Pinning.

If a virtual thread encounters a blocking operation while it is inside a synchronized block or method, or executing a native method (JNI), it gets “pinned” to the carrier thread. The JVM cannot unmount it.

If you pin a virtual thread and then block, you are back to holding hostage an OS thread.

The fix: If you are updating old code on Java 21, try to replace synchronized with ReentrantLock where the guarded section blocks. JDK 24 removes most of the synchronized pinning (JEP 491), but until you are on that release, be aware of it.
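
As a sketch of that swap, here is a tiny counter that blocks while holding a `ReentrantLock` rather than sitting inside a `synchronized` block. `LockedCounter` and its methods are hypothetical names, and the `Thread.sleep(1)` stands in for real blocking I/O done under the lock.

```java
import java.util.concurrent.Executors;
import java.util.concurrent.locks.ReentrantLock;

class LockedCounter {
    private final ReentrantLock lock = new ReentrantLock();
    private int count;

    // Blocks while holding the lock. With ReentrantLock the virtual thread
    // can still be unmounted during the sleep instead of pinning its carrier.
    void incrementSlowly() {
        lock.lock();
        try {
            Thread.sleep(1); // placeholder for blocking I/O under the lock
            count++;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        } finally {
            lock.unlock(); // always release, even on failure
        }
    }

    int count() {
        lock.lock();
        try {
            return count;
        } finally {
            lock.unlock();
        }
    }

    // Runs `tasks` increments on virtual threads and returns the final count.
    static int runDemo(int tasks) {
        LockedCounter counter = new LockedCounter();
        try (var executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < tasks; i++) {
                executor.execute(counter::incrementSlowly);
            }
        }
        return counter.count();
    }

    public static void main(String[] args) {
        System.out.println("final count: " + runDemo(200));
    }
}
```

On Java 21 you can also run with `-Djdk.tracePinnedThreads=full` to get a stack trace when a virtual thread blocks while pinned, which helps find the `synchronized` blocks worth converting.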

3. Not for CPU Tasks

Virtual threads are optimized for I/O-bound tasks (waiting for databases, APIs, files). They are not magic turbo buttons for CPU-bound tasks (video encoding, massive mathematical calculations).

If you have 8 cores and 8 heavy CPU tasks, 8 platform threads are perfect. Throwing 10,000 virtual threads at CPU tasks will just add scheduling overhead and slow things down.
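
For contrast, here is roughly what the CPU-bound case can look like: a fixed pool of platform threads sized to the core count, each chewing through one slice of the work. The sum-of-squares task and the `CpuBoundWork` class are invented for the example; any pure computation would do.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

class CpuBoundWork {
    // Hypothetical CPU-heavy task: sum of n*n for n in [from, to).
    static long sumOfSquares(long from, long to) {
        long sum = 0;
        for (long n = from; n < to; n++) {
            sum += n * n;
        }
        return sum;
    }

    // Splits [0, limit) into one chunk per core and sums the chunks on a
    // fixed pool of platform threads.
    static long parallelSumOfSquares(long limit) {
        int workers = Runtime.getRuntime().availableProcessors();
        long chunk = (limit + workers - 1) / workers; // ceiling division
        try (var pool = Executors.newFixedThreadPool(workers)) {
            List<Future<Long>> parts = new ArrayList<>();
            for (int w = 0; w < workers; w++) {
                long from = Math.min(limit, w * chunk);
                long to = Math.min(limit, from + chunk);
                Callable<Long> task = () -> sumOfSquares(from, to);
                parts.add(pool.submit(task));
            }
            long total = 0;
            for (Future<Long> part : parts) {
                total += part.get();
            }
            return total;
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(parallelSumOfSquares(1_000_000));
    }
}
```

More threads than cores would not make this finish sooner, because the cores are already busy the whole time.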

Conclusion

Java Virtual Threads are perhaps the most significant change to the Java platform since lambdas in Java 8.

They allow us to return to the simple, readable, sequential programming style we love, while unlocking the massive scalability required by modern cloud applications.

Blocking is no longer a dirty word. It’s okay to wait again. The JVM has your back.